Almost every enterprise today is experimenting with AI-generated content. But few organisations are confident enough to rely on it without human oversight. The main reason is the AI trust gap – the disconnect between how quickly AI can produce content and how reliably teams can validate it.

Because of this trust gap, 99% of C-suite leaders now demand content guardrails before allowing AI deeper access into their organisations[1].

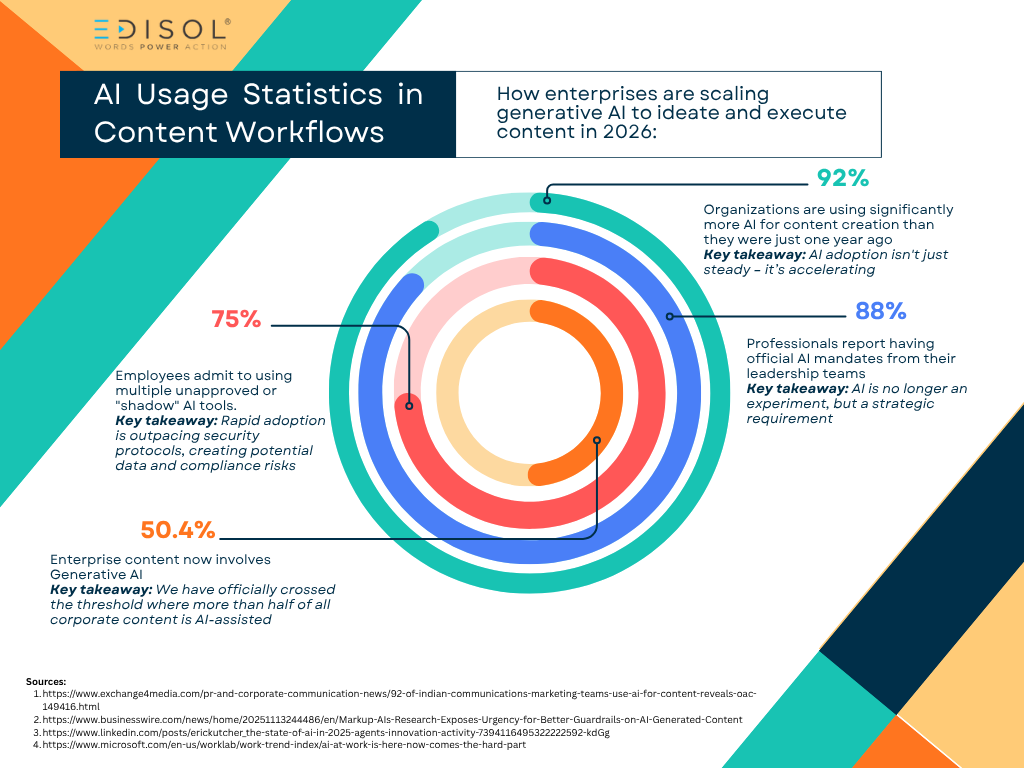

The Popularity of AI in Content Workflows

Enterprises are incorporating generative AI to ideate and execute their content, and usage is growing faster with each passing year[2]. When measured, here’s what it looks like:

The ease of using AI may push these numbers even higher in the coming years. But it also makes consistency, compliance, and accuracy increasingly difficult to maintain – especially given that ‘shadow AI’ (unauthorised use of AI among employees) is now common.

The Consequences of Unmonitored AI

Leaders are becoming more aware of the consequences of using unchecked AI outputs. In fact, 57% of respondents to the Markup AI survey say organisations already face moderate to high risk from unsafe AI-generated content[3]. Their main concerns are:

Regulatory Violations

Regulatory violations occur when AI-generated content breaks laws or compliance rules that apply to your industry.

For example:

- Making unapproved claims about a medical product

- Violating financial disclosure rules by sharing incorrect investment information

- Not following data-privacy regulations (like GDPR) when generating content

- Sharing content that doesn’t meet advertising standards or legal disclaimers

Regulators can impose fines, legal action, or sanctions if a company publishes AI content that violates rules.

Intellectual Property and Copyright Issues

These issues arise when AI creates content that accidentally copies, mimics, or reproduces someone else’s protected work.

For example:

- Rewriting text too closely from a copyrighted article

- Generating images that resemble existing artwork or brand assets

- Using trademarked terms or slogans without permission

- Producing content built on copyrighted datasets that the company doesn’t own

IP violations can lead to lawsuits and financial penalties, and can ultimately damage relationships with creators or partners.

Inaccurate or Misleading Information

This happens when AI produces content that appears confident and polished but is factually incorrect or only partially correct.

For example:

- Incorrect statistics or dates

- Incorrect product descriptions

- Invented facts

- Misleading instructions or claims

Misinformation can damage customer trust, misguide internal teams, and create liability issues.

Brand Misalignment or Inconsistent Tone

AI writes in different styles depending on the prompt, so its output can unintentionally drift away from the company’s established voice. Over time, that inconsistency weakens brand identity.

For example:

- Tone switching between casual, formal, or friendly

- Content using phrases or attitudes that don’t match brand values

- Messaging that doesn’t align with the brand voice and tonality

- Off-brand language or culturally insensitive wording

Because of these risks, many enterprises trust AI in theory but not in execution. Despite growing use of AI tools, leaders’ actions tell a different story[4]:

- 97% of organisations believe AI can check its own work

- Yet 80% still rely on manual reviews or spot checks of content

- Only 33% consider their AI content guardrails strong and consistently applied

Human reviewers and content creators often remain the final gatekeepers, making sure that published content aligns with the brand’s visual identity and voice.

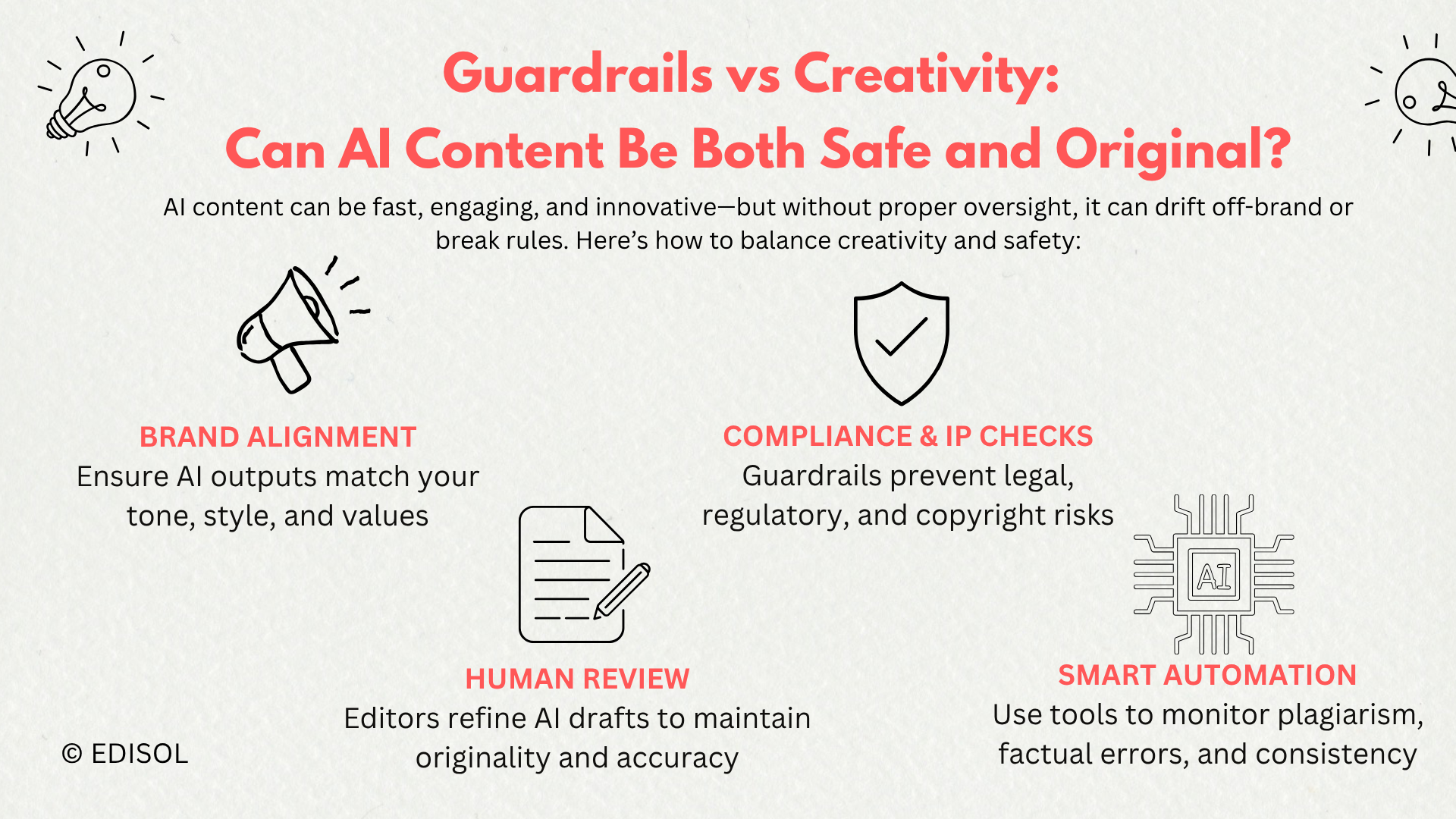

The Strategic Importance of Guardrails

C-suite leaders understand that AI alone cannot audit its own output. To produce content safely, enterprises need guardrails that ensure:

- Accuracy: Reducing hallucinations and misinformation

- Compliance: Preventing regulatory or legal breaches

- Brand Protection: Maintaining tone, terminology, and values

- Consistency: Upholding content standards across teams and tools
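
As a rough illustration of how the guardrails listed above might translate into an automated pre-publication check, here is a minimal Python sketch. Everything in it is an assumption made for the example: the rule lists (`BANNED_CLAIMS`, `PREFERRED_TERMS`), the `Finding` type, and the `review` function are hypothetical names, not part of any specific product or of the survey cited here. It only shows the general shape of such a check and is not a substitute for editorial or legal review.

```python
import re
from dataclasses import dataclass

# Hypothetical rules, for illustration only. A real system would load these
# from a maintained compliance policy and brand style guide.
BANNED_CLAIMS = ["guaranteed returns", "risk-free", "clinically proven"]
PREFERRED_TERMS = {"e-mail": "email", "organization": "organisation"}


@dataclass
class Finding:
    category: str  # "compliance", "accuracy", or "brand"
    message: str


def _contains_phrase(text, phrase):
    # Whole-phrase, case-insensitive match.
    pattern = rf"\b{re.escape(phrase)}\b"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def check_compliance(text):
    # Compliance: flag claims that have not been approved.
    return [
        Finding("compliance", f"Unapproved claim: '{phrase}'")
        for phrase in BANNED_CLAIMS
        if _contains_phrase(text, phrase)
    ]


def check_accuracy(text):
    # Accuracy: flag sentences that quote a percentage without a
    # bracketed citation such as [3] in the same sentence.
    findings = []
    for sentence in re.split(r"(?<=[.!?])\s+", text):
        has_statistic = re.search(r"\d+(?:\.\d+)?%", sentence)
        has_citation = re.search(r"\[\d+\]", sentence)
        if has_statistic and not has_citation:
            findings.append(
                Finding("accuracy", f"Uncited statistic: {sentence.strip()}")
            )
    return findings


def check_brand(text):
    # Brand and consistency: flag terminology the style guide replaces.
    return [
        Finding("brand", f"Use '{preferred}' instead of '{term}'")
        for term, preferred in PREFERRED_TERMS.items()
        if _contains_phrase(text, term)
    ]


def review(text):
    # Run every check; an empty list means no rule was triggered.
    findings = []
    for check in (check_compliance, check_accuracy, check_brand):
        findings.extend(check(text))
    return findings


if __name__ == "__main__":
    draft = "Our fund offers guaranteed returns. 80% of clients agree. Send us an e-mail."
    for finding in review(draft):
        print(f"[{finding.category}] {finding.message}")
```

In a workflow like this, a draft with any findings would be routed to a human editor rather than published automatically, so the automated checks narrow down what reviewers need to look at instead of replacing them.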

The Solution: Closing the AI Trust Gap

To maintain your brand’s credibility, your company needs a safety system for its content. A professional content agency with experienced senior editors can:

- Scan, evaluate, and refine generated content to close the gap between content strategy and content management

- Ensure every published piece is safe and reliable through editorial review, fact-checking, and consistency checks

- Integrate oversight within existing marketing workflows, so content creation, editing, approval, and publishing happen smoothly without extra chaos

- Apply E-E-A-T principles (Experience, Expertise, Authoritativeness, Trustworthiness) to each piece of content

Conclusion

The companies winning with AI are not the ones generating the most content. They’re the ones generating the most trustworthy content.

Content guardrails do not stifle the creativity or uniqueness of content. They make sure it passes every check and satisfies user intent. With full‑stack content marketing services, Edisol helps enterprises not only create content but manage it with accountability, clarity, and control.

Ready to upgrade from risky auto‑content to trusted brand storytelling? Contact us and get started today.

Frequently Asked Questions

Why do C-suite leaders demand content guardrails now?

Rapid AI adoption has increased risks like misinformation, regulatory violations, IP issues, and brand inconsistency. Content guardrails ensure AI content is safe, reliable, and on-brand.

How does a guardrail system help close the AI trust gap?

A content guardrail system validates content for accuracy, compliance, IP safety, and brand consistency. This helps reduce risk and increase confidence in publishing content generated by AI.

What are content guardrails in practice?

They are checks, systems, and workflows that review AI-generated content for accuracy, compliance, IP safety, and brand alignment before it goes live.

What factors contribute to trustworthy AI content?

Trustworthy AI content often depends on a combination of human review, editorial guidance, compliance checks, and brand-aligned guardrails. However, results can vary by organisation and process.

References

[1] https://markup.ai/wp-content/uploads/2025/11/Markup-AI-Survey-Report.pdf

[2] https://markup.ai/wp-content/uploads/2025/11/Markup-AI-Survey-Report.pdf

[3] https://markup.ai/wp-content/uploads/2025/11/Markup-AI-Survey-Report.pdf

[4] https://markup.ai/wp-content/uploads/2025/11/Markup-AI-Survey-Report.pdf